Digital disinformation has become one of the main reputational risks for companies and executives, powered by artificial intelligence and algorithmic amplification systems.

Online manipulation is the strategic use of false or distorted content to influence public perception, alter narratives, and affect the digital reputation of individuals and organizations.

Today, a well-placed false narrative can have more influence than official communication, affecting decisions, business relationships, and public perception in a matter of hours.

The connection between fake news and online reputation has become a strategic issue that transcends corporate communication and enters fully into the realm of corporate governance.

How does online manipulation work in 2025?

In 2025, the European Union strengthened its regulatory response to information manipulation with the implementation of the Digital Services Act (DSA), which imposes due diligence obligations on platforms to mitigate systemic risks, including disinformation.

What is the current architecture of disinformation?

Today’s fake news doesn’t follow the rudimentary pattern of the past. It operates under hybrid logics that combine digital optimization, emotional impact, and strategic storytelling.

Algorithmic manipulation and negative positioning

Disinformation can be designed to solidify harmful associations in search engines. When certain negative keywords are systematically linked to a name or brand, adverse reputation indexing occurs.
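One rough way to quantify this kind of association is to measure how often a brand name appears alongside negative terms in a sample of search result snippets. The sketch below is only illustrative: the function name `negative_association_share`, the sample snippets, and the term list are assumptions made for the example, not part of any specific tool.

```python
def negative_association_share(snippets, brand, negative_terms):
    """Return the fraction of brand-mentioning snippets that also contain
    at least one negative term (simple case-insensitive substring match)."""
    brand_l = brand.lower()
    terms = [t.lower() for t in negative_terms]
    # Keep only snippets that mention the brand at all.
    mentioning = [s.lower() for s in snippets if brand_l in s.lower()]
    if not mentioning:
        return 0.0
    flagged = sum(1 for s in mentioning if any(t in s for t in terms))
    return flagged / len(mentioning)


# Hypothetical snippets, e.g. collected manually from the first results page.
results = [
    "Acme Corp announces quarterly results",
    "Is Acme Corp a scam? Users report fraud",
    "Acme Corp fraud allegations spread online",
    "Weather forecast for the weekend",
]
share = negative_association_share(results, "Acme Corp", ["scam", "fraud"])
print(f"{share:.2f}")
```

Tracking this share over time, rather than reading it once, is what would show whether a negative association is consolidating or fading.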

Fake news doesn’t need to be credible: it needs to be repeated and well-positioned.

Stanford’s AI Index Report warns that the acceleration of AI is multiplying exposure to synthetic content, reinforcing the need to build more verifiable and resilient information environments.

What role does artificial intelligence play in disinformation?

The growth of AI-generated content adds another layer of complexity.

In 2025, NIST published a key technical guide on safe practices in generative and foundation models.

This reinforces the idea that traceability and content governance are becoming critical to reducing the risk of manipulation.

Why does fake news directly affect digital reputation?

The reputational damage resulting from a false narrative can have tangible consequences: suspension of negotiations, loss of trust, institutional deterioration, and increased scrutiny.

In this new scenario, reputational protection is no longer reactive but requires a structured and proactive approach. One example is how organizations are applying combined positioning and storytelling approaches in services such as digital PR.

Furthermore, when misinformation persistently ranks in search results, associating negative terms with a name or brand, the reaction must be both technical and strategic.

In these cases, adopting comprehensive risk analysis approaches makes it possible to identify escalation patterns and anticipate potential reputational damage scenarios.
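As a simplified illustration of what identifying an escalation pattern can mean in practice, the sketch below flags days on which mentions jump well above their recent baseline. The function name `escalation_days`, the seven-day window, the threshold of three standard deviations, and the sample counts are all assumptions chosen for the example.

```python
from statistics import mean, pstdev


def escalation_days(daily_mentions, window=7, threshold=3.0):
    """Return the indexes of days whose mention count is at least
    `threshold` standard deviations above the trailing `window`-day mean."""
    flagged = []
    for i in range(window, len(daily_mentions)):
        baseline = daily_mentions[i - window:i]
        mu = mean(baseline)
        # Floor the deviation at 1.0 so a flat baseline does not
        # turn every tiny increase into an alert.
        sigma = max(pstdev(baseline), 1.0)
        if (daily_mentions[i] - mu) / sigma >= threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily mention counts for a brand; the last two days spike.
mentions = [10, 12, 11, 9, 10, 13, 11, 12, 48, 95]
print(escalation_days(mentions))
```

A real risk analysis would combine a signal like this with source quality, reach, and content review, since volume alone does not distinguish a coordinated campaign from legitimate news coverage.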

Based on this analysis, it is possible to apply countermeasures that progressively displace harmful narratives through verified, structured content reinforced by digital authority signals.

And when false content infringes rights or is improperly kept visible, strategies such as those described in “The right to be forgotten: strategy for deletion” can be applied.

How can you defend your reputation against misleading content?

Prevention, monitoring and strategic response.

Professional defense against online manipulation rests on three pillars: auditing, monitoring, and authoritative narrative response.

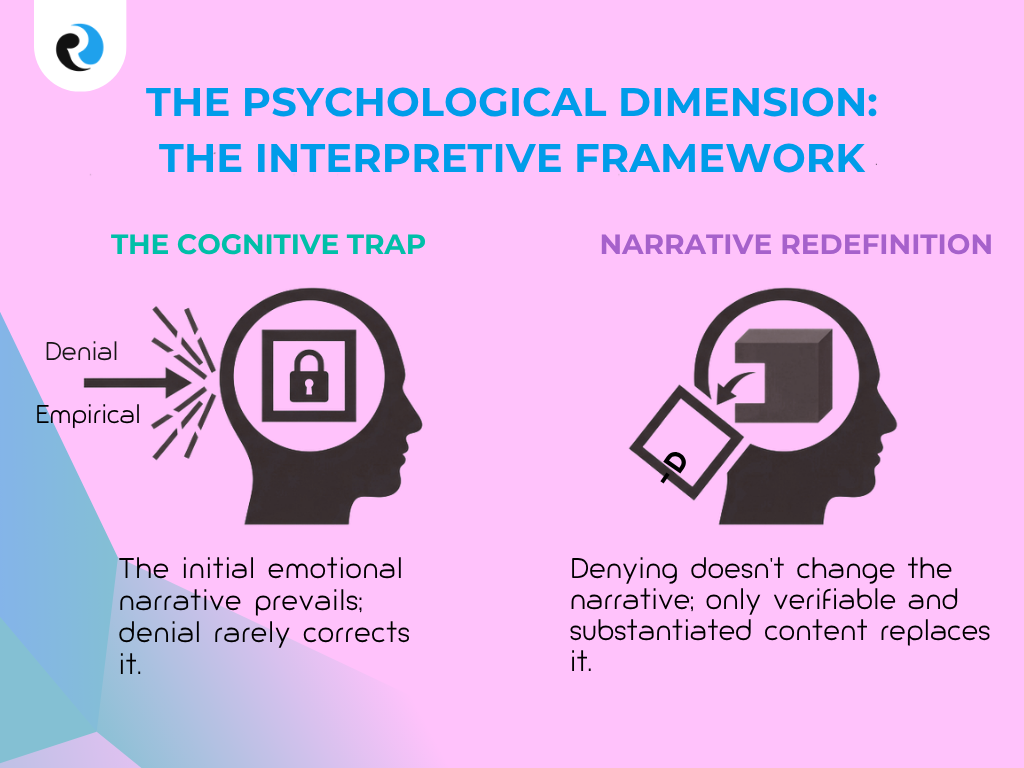

Monitoring is not limited to social media. In some cases, a campaign is prepared or amplified from less visible spaces, so it makes sense to incorporate advanced surveillance capabilities such as those described in ReputationUP’s radar tool for monitoring the dark web.

Psychological dimension of disinformation

The problem isn’t just technical: it’s cognitive. A false narrative with emotional weight tends to quickly take root in public perception, even after a correction.

Therefore, the response cannot be limited to simply denying the claim. It must redefine the dominant interpretive framework through verifiable, coherent, and sustained content.

Conclusion

Disinformation is not just a problem of content, but of perception. In an environment where artificial intelligence amplifies narratives and algorithms prioritize relevance over truth, digital reputation becomes a vulnerable asset.

Information manipulation is no longer an isolated risk, but a structural threat that demands a professional approach based on technology, narrative strategy, and normative understanding.

Today, the difference lies not in avoiding a crisis, but in detecting and neutralizing a false narrative before it takes hold.

Because in today’s digital environment, the truth does not always prevail on its own: what prevails is what manages to position itself, be repeated, and be interpreted as credible by algorithms and audiences.

Frequently Asked Questions (FAQ)

Yes. Today, many campaigns rely on coordinated networks, concise content, and micro-communities. Apparent credibility can be built through repetition and distribution, not through journalistic authority.

Repetitive timing patterns, newly created accounts, very similar messages, and a concentration of specific terms designed to position a negative association.
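Two of these signals, newly created accounts and very similar messages, can be approximated with a few lines of code. The sketch below is a minimal illustration under stated assumptions: the post schema (`text`, `account_created`), the 30-day definition of a new account, and the 0.85 similarity threshold are hypothetical choices, and the pairwise comparison is only practical for small samples.

```python
from datetime import datetime, timedelta
from difflib import SequenceMatcher
from itertools import combinations


def coordination_signals(posts, now, new_account_days=30, similarity_threshold=0.85):
    """Compute the share of posts from recently created accounts and the
    share of posts that have at least one near-identical counterpart."""
    n = len(posts)
    if n == 0:
        return {"new_account_share": 0.0, "near_duplicate_share": 0.0}

    cutoff = now - timedelta(days=new_account_days)
    new_accounts = sum(1 for p in posts if p["account_created"] >= cutoff)

    # Compare every pair of posts and record those that are almost identical.
    duplicated = set()
    for (i, a), (j, b) in combinations(enumerate(posts), 2):
        ratio = SequenceMatcher(None, a["text"].lower(), b["text"].lower()).ratio()
        if ratio >= similarity_threshold:
            duplicated.update((i, j))

    return {
        "new_account_share": new_accounts / n,
        "near_duplicate_share": len(duplicated) / n,
    }


# Hypothetical sample: two near-identical posts from new accounts, one organic post.
now = datetime(2025, 6, 1)
posts = [
    {"text": "Acme Corp is a total scam, avoid it!", "account_created": datetime(2025, 5, 20)},
    {"text": "Acme Corp is a total scam, avoid it!!", "account_created": datetime(2025, 5, 25)},
    {"text": "Had a good experience with their support team.", "account_created": datetime(2019, 3, 1)},
]
signals = coordination_signals(posts, now)
print(signals)
```

High values on both measures at once are a reason to look closer, not proof of coordination: genuine users also repeat slogans and join platforms during a controversy.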

Because the first narrative often sets the mental framework. The strategy should replace the dominant narrative with verifiable content and accumulated authority, not just with negation.

When content infringes rights, contains demonstrably false information, or affects security, combining legal intervention and narrative repositioning is often more effective.

Through changes in visible results, a drop in negative associations, a reduction in coordinated mentions, and greater consistency of the narrative in search engines and AI systems.